A few months ago, I had to understand in detail how the Container Network Interface (CNI) is implemented in order to, well, simply get a chaos testing solution working on a bare-metal installation of Kubernetes.

At that time, I found a few resources that helped me understand how this was implemented, mainly Kubernetes’ official documentation on the topic, and the official CNI specification. And yes, this specification simply consists of a Markdown document, which took me a considerable amount of energy to digest and process.

I did not, however, find a step-by-step guide explaining how a CNI works in practice: Is it running as a daemon? Does it communicate over a socket? Where are its configuration files?

As it turned out, the answer to these 3 questions is not binary: a typical CNI is both a binary and a daemon, communication happens over a socket and over another (unexpected, more on that later) channel, and its configuration files are stored in multiple locations!

What is a CNI after all?

A CNI is a Network Plugin, installed and configured by the cluster administrator. It receives instructions from Kubernetes to set up the network interface(s) and IP address for the pods.

It is important to highlight early on that a CNI plugin is an executable, as stated in the CNI specification.

How does the CNI plugin know which interface type to configure, which IP address to set, etc.? It receives instructions from Kubernetes, and more specifically from the kubelet daemon running on the worker and master nodes. These instructions are sent through:

- Environment variables: `CNI_COMMAND`, `CNI_NETNS`, `CNI_CONTAINERID`, and more (exhaustive list here).
- `stdin`, in the form of a JSON document describing the network configuration for our container.
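To make these two channels concrete, here is a minimal sketch of what the plugin side could look like in bash. `toy_cni_plugin` is a hypothetical name and the version numbers are only illustrative; the function configures nothing and just reports what it received. A real plugin would also print a result JSON on `stdout` for `ADD`.

```shell
#!/bin/bash
# Hypothetical toy plugin: it reports what it received and configures nothing.
# The CNI_* variable names come from the CNI specification.
toy_cni_plugin() {
  local config
  config=$(cat)   # the network configuration arrives on stdin as JSON

  case "$CNI_COMMAND" in
    VERSION)
      # Illustrative version numbers only
      printf '{"cniVersion":"0.4.0","supportedVersions":["0.3.1","0.4.0"]}\n'
      ;;
    ADD)
      # A real plugin would now create an interface inside $CNI_NETNS
      # and print a result JSON on stdout.
      printf 'would configure %s in netns %s for container %s\n' \
        "$CNI_IFNAME" "$CNI_NETNS" "$CNI_CONTAINERID" >&2
      printf 'received config: %s\n' "$config" >&2
      ;;
    DEL)
      printf 'would remove %s from netns %s\n' "$CNI_IFNAME" "$CNI_NETNS" >&2
      ;;
    *)
      printf 'unsupported CNI_COMMAND: %s\n' "$CNI_COMMAND" >&2
      return 1
      ;;
  esac
}

# Usage: the command goes in through the environment, the config through stdin.
echo '{"cniVersion":"0.4.0","name":"demo","type":"toy"}' | CNI_COMMAND=VERSION toy_cni_plugin
```

The point of the sketch is only the dispatch on `CNI_COMMAND` and the read from `stdin`; everything network-related is left out on purpose.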

To better understand what happens when a new pod is created, I put together the following sequence diagram:

Sequence diagram highlighting the exchanges between the Container Runtime Interface (CRI) and the Container Networking Interface (CNI)

We have several steps happening:

- `kubelet` sends a command (`env` variables) and the network configuration (`json`) for a pod to the CNI executable.
- If the CNI executable is a simple CNI plugin (e.g. the `bridge` plugin), the configuration is directly applied to the pod network namespace (netns, I’ll explain that shortly).
- If the CNI is more advanced (e.g. the Calico CNI), then the CNI executable will most probably contact its CNI daemon (through a socket call), where more advanced logic is happening. This “advanced” CNI daemon then configures the network interface for our pod.
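The first step above can be sketched from the caller's side. This is not what kubelet or a container runtime actually runs (those are written in Go); it is a rough bash approximation. The `fake-plugin` executable, the container ID, the netns path and the network name are all made-up values for illustration.

```shell
#!/bin/bash
# Sketch of the caller side. The plugin is a stand-in written on the fly,
# and every value passed to it is made up.
plugin_dir=$(mktemp -d)
cat > "$plugin_dir/fake-plugin" <<'EOF'
#!/bin/bash
echo "command=$CNI_COMMAND netns=$CNI_NETNS config=$(cat)"
EOF
chmod +x "$plugin_dir/fake-plugin"

invoke_plugin() {
  local plugin=$1 command=$2 config=$3
  # Instructions go in through the environment, configuration through stdin.
  printf '%s' "$config" | env \
    CNI_COMMAND="$command" \
    CNI_CONTAINERID="demo-container" \
    CNI_NETNS="/var/run/netns/demo" \
    CNI_IFNAME="eth0" \
    CNI_PATH="$plugin_dir" \
    "$plugin_dir/$plugin"
}

result=$(invoke_plugin fake-plugin ADD '{"cniVersion":"0.4.0","name":"demo-net","type":"fake-plugin"}')
echo "$result"
rm -rf "$plugin_dir"
```

Whatever the plugin prints on `stdout` is its answer to the caller, which is why the sketch captures it in `result`.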

netns — network namespace?

Any idea how pods get their network interfaces and IP addresses? And how pods are isolated from the node (server) on which they run?

This is achieved through a Linux feature called namespaces: “Linux Namespaces are a feature of the Linux kernel that partitions kernel resources […]” Namespaces are extensively used when it comes to containerization, to partition the network of a Linux host, the process IDs, the mount paths, etc. If you want to learn more about that, then this article is a good read.
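One quick way to see this for yourself on a Linux host: the kernel exposes the network namespace of every process as a symlink under `/proc`. The helper name `netns_of` below is mine, not a standard tool.

```shell
#!/bin/bash
# Print the network namespace a process belongs to (Linux only).
# The kernel exposes it as a symlink of the form net:[<inode number>];
# processes that share a namespace show the same number.
netns_of() {
  readlink "/proc/$1/ns/net"
}

netns_of "$$"   # the namespace of the current shell
```

Comparing the value for a process on the host with the value for a process inside a pod should show two different numbers. Reading the entry of another user's process usually requires root.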

In our case, we simply need to understand that a pod is associated with a network namespace (netns), and that the CNI, knowing this network namespace, can for example attach a network interface and configure IP addresses for our pod. It will do so with commands that will look like this:

# given $netns, the network namespace id (here, the PID of a process running in the pod, as nsenter -t expects a PID), e.g. netns=46165437

# 1st: we create a virtual interface

ip link add name toto_if type ipip local 10.20.30.46 remote 10.30.30.1

# 2nd, we put this interface in the network namespace of our pod

ip link set dev toto_if netns $netns

# 3rd, we can for example change the ip address or routing parameters:

nsenter -t $netns --network ip addr add 1.2.3.4/30 dev toto_if

nsenter -t $netns --network ip route add default via 1.2.3.5

ℹ️ If you find this interesting, please note that working with the network namespace of your pod can greatly help you debug your networking problems: you can run any executable installed on your host while restricting it to the network namespace of your pod. Concretely, you could do:

nsenter -t $netns --network tcpdump -i any icmp

This only captures the traffic that your pod sees. You can use this command even if your pod’s image doesn’t include the tcpdump executable. More info on this debugging technique is in this article.

Down the rabbit hole: intercepting calls to a CNI plugin

Let’s recap. We know that:

- the CNI is an executable
- the CNI is called by `kubelet` (with the Container Runtime Interface (CRI), e.g. `dockershim`, `podman`, etc., but let’s ignore that for our discussion)
- the CNI receives instructions through environment variables and a `json` file

If you have a CNI that doesn’t behave properly, being able to watch/intercept the exchanges between the Container Runtime (CRI) and the Network Plugin (CNI) becomes extremely handy. (I had an issue with Multus not correctly handling its sub-plugins, which I documented here, and for which I submitted a fix that was merged in August 2020.)

For that reason, I spent some time creating an interception script that you can simply install in place of the real CNI executable. This script intercepts and logs the environment variables, as well as the three standard file descriptors (`stdin`, `stdout`, and `stderr`), without preventing the real CNI from doing its job and attaching the correct network interfaces to our pods.

Concretely, to intercept calls to e.g. the calico CNI, you need to:

- Rename the real `calico` executable to `real_calico`. This executable is most often located in the `/opt/cni/bin/` directory.
- Save the following script as `/opt/cni/bin/calico` (don’t forget to make the script executable 😉).
- Go to the `/tmp/cni_logging` directory and watch as files are being created for all CRI/CNI exchanges 🔍

#!/bin/bash

# Author: Clément Nussbaumer <[email protected]>, Aug 2020

#

# CNI interception script: allows live debugging of CNI calls.

# Usage: rename the real CNI binary by prepending real_ to its original name,

# e.g. real_multus for multus. Then put this script in place of the binary:

# concretely, name it `multus` if you want to intercept multus calls.

cni=$(basename "$0")

echo 'intercepted '$cni' cni with command: ' $CNI_COMMAND ' and caller: ' $(ps -o comm= $PPID) | logger -t cni

stdin="$([[ -p /dev/stdin ]] && cat -)"

dir=/tmp/cni_logging;

mkdir -p "$dir"

current_time=$(date "+%Y.%m.%d-%H.%M.%S.")

# 10# forces base 10: %N is zero-padded, and bash would otherwise read a leading 0 as octal

current_millis=$((10#$(date +%N) / 1000))

if [[ $CNI_COMMAND = 'VERSION' ]]; then

file_name=/dev/zero

else

file_name=$dir/$current_time$current_millis'-'$cni'-'$CNI_COMMAND

fi

env | grep -e 'CNI_' > $file_name'_env'

# source of this clever snippet of code: https://stackoverflow.com/a/41069638

# if you want to understand how this "magic" work, read this: https://wiki.bash-hackers.org/howto/redirection_tutorial

: catch STDOUT STDERR cmd args..

catch()

{

eval "$({

__2="$(

{ __1="$("${@:3}")"; } 2>&1;

ret=$?;

printf '%q=%q\n' "$1" "$__1" >&2;

exit $ret

)"

ret="$?";

printf '%s=%q\n' "$2" "$__2" >&2;

printf '( exit %q )' "$ret" >&2;

} 2>&1 )";

}

: pipe_stdin

pipe_stdin()

{

(/opt/cni/bin/real_$cni <<EOF

$stdin

EOF

)

}

catch stdout stderr pipe_stdin "$@"

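# quote the variables so that whitespace and line breaks are logged as received

printf '%s\n' "$stdin" > "$file_name"'_stdin'

printf '%s\n' "$stdout" > "$file_name"'_stdout'

printf '%s\n' "$stderr" > "$file_name"'_stderr'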

#printf '%s' "$stdout" | logger -t cni # uncomment if you want to show the output of the CNI call

printf '%s\n' "${stdout}"

printf '%s\n' "$stderr" 1>&2

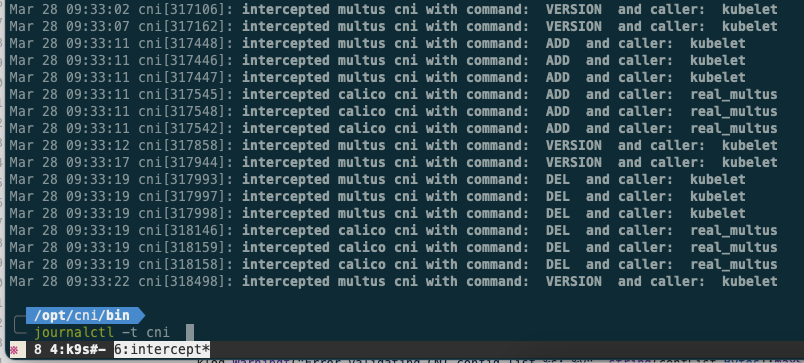

Once this is done, you will see many entries (tagged `cni`) in your journal, depending on the number of pods, each corresponding to a CRI/CNI exchange. You can list them with `journalctl -t cni`.

The screenshot above shows the exchanges that happened between the CRI and the CNI. Here we see pod ADDitions and DELetions.

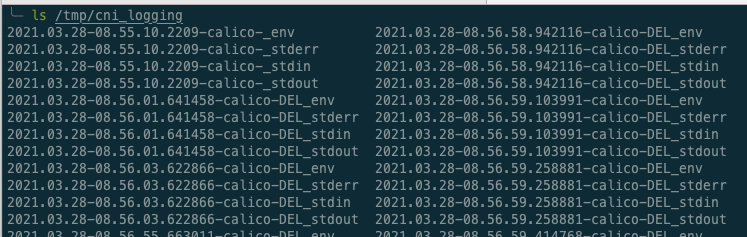

Your `/tmp/cni_logging/` directory will also contain a lot of log files. For each CRI/CNI exchange, four files are created: `env`, `stdin`, `stdout`, and `stderr`.
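With many pods this directory fills up quickly, so a small helper can make it easier to browse. The sketch below assumes the file naming used by the interceptor script (a common prefix followed by `_env`, `_stdin`, `_stdout` or `_stderr`); the function names `list_exchanges` and `show_exchange` are hypothetical.

```shell
#!/bin/bash
# List the exchanges recorded by the interceptor, one line per exchange,
# by stripping the _stdin suffix from the file names.
list_exchanges() {
  local dir=${1:-/tmp/cni_logging}
  find "$dir" -maxdepth 1 -type f -name '*_stdin' -exec basename {} _stdin \; | sort
}

# Print the four files of one exchange, each under a small header.
show_exchange() {
  local dir=$1 exchange=$2 part
  for part in env stdin stdout stderr; do
    printf '== %s ==\n' "$part"
    cat "$dir/${exchange}_${part}"
  done
}
```

You would call `list_exchanges /tmp/cni_logging` to get the exchange names, then pass one of them to `show_exchange /tmp/cni_logging <name>`. Since the names start with a timestamp, the sorted list is roughly chronological.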

Finally, for the curious reader who made it here 😜, an example output of our interceptor script can be seen in this GitHub Gist (not embedded here, as it would otherwise make this story even longer).

Having a look at it, you see what happens when Kubernetes/Kubelet creates a pod:

- The `CNI_COMMAND` environment variable is set to `ADD`, and the network namespace of the pod is sent through the `CNI_NETNS` environment variable.
- A JSON configuration file, sent through `stdin`, specifies in which subnet to assign an IPv4 address, as well as other optional parameters (e.g. a port when using the `portMapping` functionality).
- An answer from the CNI, sent through `stdout`, contains the assigned IPv4 address as well as the name of the newly created network interface.

We have up until now ignored the way Kubelet and the Container Runtime Interface choose which CNI is to be used. When the container runtime is Docker (and the CRI dockershim), the CRI scans the /etc/cni/net.d/ folder, and chooses the first (in alphabetical order) .conf file (e.g. 00-multus.conf). You can read more about this in the dockershim code: K8s/dockershim/network/cni/cni.go::getDefaultCNINetwork
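The selection rule described above can be approximated in a few lines of bash. This only mirrors the description in this story; it is not a port of dockershim’s Go code, and `default_cni_conf` is a hypothetical name.

```shell
#!/bin/bash
# Print the first *.conf file of a directory in alphabetical order.
# Returns a non-zero status when the directory contains no such file.
default_cni_conf() {
  local dir=${1:-/etc/cni/net.d} first
  # LC_ALL=C keeps the sort order independent of the current locale
  first=$(find "$dir" -maxdepth 1 ! -type d -name '*.conf' | LC_ALL=C sort | head -n 1)
  [ -n "$first" ] && printf '%s\n' "$first"
}
```

Running `default_cni_conf` on a node tells you which configuration would win under this rule, which is handy when a numeric prefix like `00-` was chosen precisely to take precedence.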

You hopefully now have a concrete understanding of the way CNIs (i.e. Network Plugins) are implemented and used within Kubernetes. If you have questions or comments, please contact me; I’d be happy to clarify parts of this story.